The challenge of evaluating Large Language Model (LLM) outputs is critical for notoriously non-deterministic AI applications, especially as they move into production.

Traditional metrics like ROUGE and BLEU fall short when assessing the nuanced, contextual responses that modern LLMs produce.

Human evaluation, while accurate, is expensive, slow, and doesn’t scale.

Enter LLM-as-a-Judge - a powerful technique that uses LLMs themselves to evaluate the quality of AI-generated content. Research shows that sophisticated judge models can align with human judgment up to 85%, which is actually higher than human-to-human agreement (81%).

In this article, we’ll explore how Spring AI’s Recursive Advisors provide an elegant framework for implementing LLM-as-a-Judge patterns, enabling you to build self-improving AI systems with automated quality control.

To learn more about the Recursive Advisors API, check out our previous article: Create Self-Improving AI Agents Using Spring AI Recursive Advisors.

💡 Demo: Find the full example implementation in the evaluation-recursive-advisor-demo.

Understanding LLM-as-a-Judge

LLM-as-a-Judge is an evaluation method where Large Language Models assess the quality of outputs generated by other models or themselves. Instead of relying solely on human evaluators or traditional automated metrics, LLM-as-a-Judge leverages an LLM to score, classify, or compare responses based on predefined criteria.

Why does it work? Evaluation is fundamentally easier than generation. When you use an LLM as a judge, you’re asking it to perform a simpler, more focused task (assessing specific properties of existing text) rather than the complex task of creating original content while balancing multiple constraints. A good analogy is that it’s easier to critique than to create. Detecting problems is simpler than preventing them.

There are two primary LLM-as-a-judge evaluation patterns:

- Direct Assessment (Point-wise Scoring): Judge evaluates individual responses, providing feedback that can refine prompts through self-refinement

- Pairwise Comparison: Judge selects the better of two candidate responses (common in A/B testing)
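The difference between the two patterns is easiest to see in the shape of the judge call. The sketch below is a minimal illustration in plain Java; `PointwiseJudge` and `PairwiseJudge` are hypothetical names invented here, and the lambdas are stubs standing in for real LLM calls.

```java
// Hypothetical types for illustration only; none of these are Spring AI classes.
final class JudgePatterns {

    /** Direct assessment: one response in, one integer score out. */
    interface PointwiseJudge {
        int score(String question, String answer);
    }

    /** Pairwise comparison: two candidates in, the preferred one out. */
    interface PairwiseJudge {
        String pickBetter(String question, String answerA, String answerB);
    }

    public static void main(String[] args) {
        // Stub judges standing in for real LLM calls.
        PointwiseJudge pointwise = (q, a) -> a.contains("Paris") ? 4 : 1;
        PairwiseJudge pairwise = (q, a, b) ->
                pointwise.score(q, a) >= pointwise.score(q, b) ? a : b;

        String question = "What is the capital of France?";
        System.out.println(pointwise.score(question, "Paris"));             // 4
        System.out.println(pairwise.pickBetter(question, "Lyon", "Paris")); // Paris
    }
}
```

A point-wise score can drive a retry loop directly, because it says how good a single answer is; a pairwise verdict only says which of two answers is better.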

Choosing the Right Judge Model

While general-purpose models like GPT-4 and Claude can serve as effective judges, dedicated LLM-as-a-Judge models consistently outperform them in evaluation tasks. The Judge Arena Leaderboard tracks the performance of various models specifically for judging tasks.

Spring AI: The Perfect Foundation

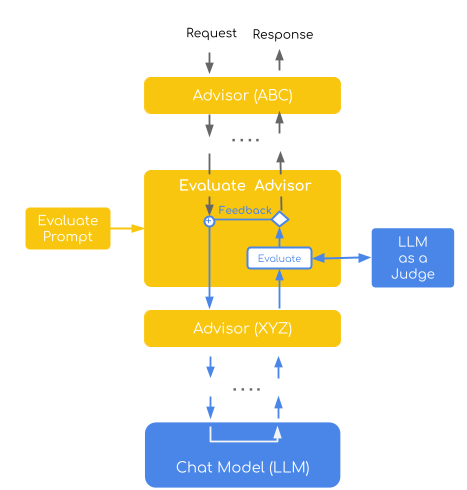

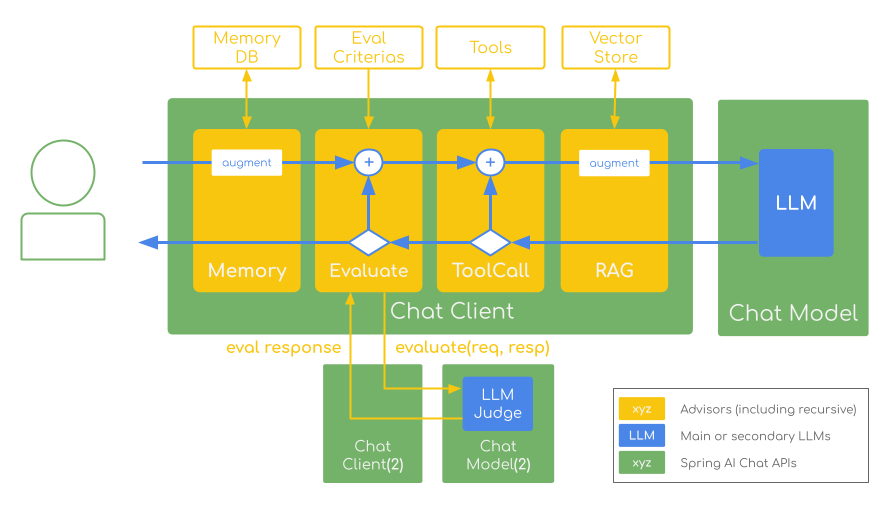

Spring AI’s ChatClient provides a fluent API that’s ideal for implementing LLM-as-a-Judge patterns. Its Advisors system allows you to intercept, modify, and enhance AI interactions in a modular, reusable way. The recently introduced Recursive Advisors take this further by enabling looping patterns that are perfect for self-refining evaluation workflows.

In this article, we’ll build a SelfRefineEvaluationAdvisor that embodies the LLM-as-a-Judge pattern using Spring AI’s Recursive Advisors.

This advisor will automatically evaluate AI responses and retry failed attempts with feedback-driven improvement: generate response → evaluate quality → retry with feedback if needed → repeat until quality threshold is met or retry limit reached.
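The generate, evaluate, retry cycle described above can be sketched independently of Spring AI. The following is a simplified plain-Java model, not the advisor itself: `SelfRefineLoop`, `Verdict`, and the wording of the feedback prompt are assumptions made for illustration, and the generator and judge are passed in as functions so that stubs can replace real model calls.

```java
import java.util.function.BiFunction;
import java.util.function.Function;

// Plain-Java model of the loop; names are hypothetical, not Spring AI types.
final class SelfRefineLoop {

    /** Judge verdict: an integer rating plus feedback for the next attempt. */
    record Verdict(int rating, String feedback) {}

    /**
     * Generates, judges, and retries with feedback until the rating reaches
     * minRating or maxAttempts is exhausted. Returns the last response either way.
     */
    static String run(String prompt,
                      Function<String, String> generator,
                      BiFunction<String, String, Verdict> judge,
                      int minRating,
                      int maxAttempts) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        String currentPrompt = prompt;
        String response = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            response = generator.apply(currentPrompt);
            Verdict verdict = judge.apply(prompt, response);
            if (verdict.rating() >= minRating) {
                return response; // quality threshold met
            }
            // Fold the judge's feedback into the next attempt.
            currentPrompt = prompt + "\n\nPrevious answer was rejected: " + verdict.feedback();
        }
        return response; // retry limit reached: fall back to the last attempt
    }

    public static void main(String[] args) {
        // Stubs: the generator only produces a plausible value once it sees feedback.
        Function<String, String> generator =
                p -> p.contains("rejected") ? "Sunny, 21C" : "Sunny, 900C";
        BiFunction<String, String, Verdict> judge = (q, r) -> r.contains("900")
                ? new Verdict(1, "temperature is not plausible")
                : new Verdict(4, "ok");
        System.out.println(run("Weather in Paris?", generator, judge, 3, 3)); // Sunny, 21C
    }
}
```

Returning the last attempt when the limit is reached is one possible degradation policy; throwing an exception or returning a fallback message would be equally valid choices.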

Let’s examine the implementation that demonstrates advanced evaluation patterns:

The SelfRefineEvaluationAdvisor Implementation

This implementation demonstrates the Direct Assessment evaluation pattern, where a judge model evaluates individual responses using a point-wise scoring system (1-4 scale). It combines this with a self-refinement strategy that automatically retries failed evaluations by incorporating specific feedback into subsequent attempts, creating an iterative improvement loop.

The advisor embodies two key LLM-as-a-Judge concepts:

- Point-wise Evaluation: Each response receives an individual quality score based on predefined criteria

- Self-Refinement: Failed responses trigger retry attempts with constructive feedback to guide improvement

Key Implementation Features

Recursive Pattern Implementation

The advisor uses callAdvisorChain.copy(this).nextCall(request) to create a sub-chain for recursive calls, enabling multiple evaluation rounds while maintaining proper advisor ordering.
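To make the sub-chain idea concrete, here is a toy model in plain Java. It does not use the Spring AI classes: `ToyChain`, `ToyAdvisor`, and `RetryingAdvisor` are hypothetical stand-ins, and the sketch assumes that `copy(advisor)` yields a fresh chain containing only the advisors ordered after the given one.

```java
import java.util.List;

// Toy model only: these are NOT the Spring AI types.
final class ToyChainDemo {

    interface ToyAdvisor {
        String advise(String request, ToyChain chain);
    }

    static final class ToyChain {
        private final List<ToyAdvisor> advisors;
        private int index = 0;

        ToyChain(List<ToyAdvisor> advisors) {
            this.advisors = List.copyOf(advisors);
        }

        /** Passes the request to the next advisor, or to the fake model at the end. */
        String nextCall(String request) {
            if (index >= advisors.size()) {
                return "model(" + request + ")";
            }
            return advisors.get(index++).advise(request, this);
        }

        /** New chain holding only the advisors that come after the given one. */
        ToyChain copy(ToyAdvisor after) {
            int pos = advisors.indexOf(after);
            if (pos < 0) {
                throw new IllegalArgumentException("advisor is not part of this chain");
            }
            return new ToyChain(advisors.subList(pos + 1, advisors.size()));
        }
    }

    /** Re-runs the downstream chain on a fresh copy for every attempt. */
    static final class RetryingAdvisor implements ToyAdvisor {
        private final int maxAttempts;
        int calls = 0;

        RetryingAdvisor(int maxAttempts) {
            this.maxAttempts = maxAttempts;
        }

        @Override
        public String advise(String request, ToyChain chain) {
            String response = null;
            for (int attempt = 1; attempt <= maxAttempts; attempt++) {
                calls++;
                response = chain.copy(this).nextCall(request + "#" + attempt);
                if (response.contains("#2")) {
                    break; // stand-in for "the judge accepted this response"
                }
            }
            return response;
        }
    }

    public static void main(String[] args) {
        ToyAdvisor logger = (req, ch) -> "log[" + ch.nextCall(req) + "]";
        RetryingAdvisor retry = new RetryingAdvisor(3);
        ToyChain chain = new ToyChain(List.of(retry, logger));
        System.out.println(chain.nextCall("q")); // log[model(q#2)]
    }
}
```

Because every attempt runs on its own copy, the downstream advisors see each retry as a complete call, which is why ordering and any state held by inner advisors matter.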

Structured Evaluation Output

Using Spring AI’s structured output capabilities, the evaluation results are parsed into a EvaluationResponse record with rating (1-4), evaluation rationale, and specific feedback for improvement.
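A record for this purpose might look like the sketch below. The component names `evaluation` and `feedback`, the range check, and the `passes` helper are assumptions added for illustration; the text above only states that the record carries a 1-4 rating, a rationale, and feedback.

```java
// Sketch of the evaluation result; component names and helpers are assumptions.
record EvaluationResponse(int rating, String evaluation, String feedback) {

    // Compact constructor: reject ratings outside the 1-4 scale early.
    EvaluationResponse {
        if (rating < 1 || rating > 4) {
            throw new IllegalArgumentException("rating must be in 1..4 but was " + rating);
        }
    }

    /** True when the rating meets the configured quality threshold. */
    boolean passes(int minRating) {
        return rating >= minRating;
    }
}

final class EvaluationResponseDemo {
    public static void main(String[] args) {
        EvaluationResponse result =
                new EvaluationResponse(2, "Temperature is implausible", "Re-check the tool output");
        System.out.println(result.passes(3)); // false
    }
}
```

Validating the rating in the constructor means a malformed judge reply fails at parse time rather than silently passing or failing the threshold check.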

Separate Evaluation Model

Uses a specialized LLM-as-a-Judge model (avcodes/flowaicom-flow-judge:q4) with a different ChatClient instance to mitigate model biases.

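Setting spring.ai.chat.client.enabled=false disables the auto-configured ChatClient.Builder, so that separate ChatClient instances can be built for each model (see Working with Multiple Chat Models).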

Feedback-Driven Improvement

Failed evaluations include specific feedback that gets incorporated into retry attempts, enabling the system to learn from evaluation failures.

Configurable Retry Logic

Supports configurable maximum attempts with graceful degradation when evaluation limits are reached.

Putting It All Together

Here’s how to integrate the SelfRefineEvaluationAdvisor into a complete Spring AI application:

In the demo, the weather tool generates invalid values in 2/3 of the cases.

The SelfRefineEvaluationAdvisor (Order 0) evaluates response quality and retries with feedback if needed, followed by MyLoggingAdvisor (Order 2) which logs the final request/response for observability.

When run, you would see output like this:

🚀 Try It Yourself: The complete runnable demo with configuration examples, including different model combinations and evaluation scenarios, is available in the evaluation-recursive-advisor-demo project.

Conclusion

Spring AI’s Recursive Advisors make implementing LLM-as-a-Judge patterns both elegant and production-ready. The SelfRefineEvaluationAdvisor demonstrates how to build self-improving AI systems that automatically assess response quality, retry with feedback, and scale evaluation without human intervention. Key benefits include automated quality control, bias mitigation through separate judge models, and seamless integration with existing Spring AI applications.

This approach provides the foundation for reliable, scalable quality assurance across chatbots, content generation, and complex AI workflows.

The critical success factors when implementing the LLM-as-a-Judge technique include:

- Use dedicated judge models for better performance (Judge Arena Leaderboard)

- Mitigate bias through separate generation/evaluation models

- Ensure deterministic results (temperature = 0)

- Engineer prompts with integer scales and few-shot examples

- Maintain human oversight for high-stakes decisions
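The prompt-engineering point can be illustrated with a small builder that spells out an integer scale and prepends few-shot examples. This is only a sketch in plain Java: `JudgePromptBuilder`, `FewShot`, and the prompt wording are hypothetical, and a real judge model may expect its own prompt format.

```java
import java.util.List;

// Hypothetical helper for illustration; the prompt wording is an assumption.
final class JudgePromptBuilder {

    /** One worked example shown to the judge before the real case. */
    record FewShot(String question, String answer, int rating) {}

    static String build(String question, String answer, List<FewShot> examples) {
        StringBuilder sb = new StringBuilder();
        sb.append("Rate the answer on an integer scale from 1 (unusable) to 4 (fully correct).\n");
        sb.append("Reply with the integer rating only.\n\n");
        for (FewShot ex : examples) {
            appendCase(sb, ex.question(), ex.answer());
            sb.append(ex.rating()).append("\n\n");
        }
        // The real case ends with an open "Rating: " for the judge to complete.
        appendCase(sb, question, answer);
        return sb.toString();
    }

    private static void appendCase(StringBuilder sb, String question, String answer) {
        sb.append("Question: ").append(question).append('\n')
          .append("Answer: ").append(answer).append('\n')
          .append("Rating: ");
    }

    public static void main(String[] args) {
        List<FewShot> examples = List.of(
                new FewShot("What is 2 + 2?", "4", 4),
                new FewShot("What is 2 + 2?", "About 5", 1));
        System.out.println(build("What is the capital of France?", "Paris", examples));
    }
}
```

An integer scale with labelled end points gives the judge discrete choices to pick from, and the examples anchor what each end of the scale means.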

⚠️ Important Note

Recursive Advisors are a new experimental feature in Spring AI 1.1.0-M4+. Currently, they are non-streaming only, require careful advisor ordering, and can increase costs due to multiple LLM calls. Be especially careful with inner advisors that maintain external state - they may require extra attention to maintain correctness across iterations. Always set termination conditions and retry limits to prevent infinite loops.

Resources

Spring AI Documentation

- Spring AI Recursive Advisors Documentation

- LLM-as-a-judge: a complete guide to using LLMs for evaluations

- Spring AI Advisors API Guide

- ChatClient API Documentation

- EvaluationAdvisor Demo Project

- Judge Arena Leaderboard - Current rankings of best-performing judge models

- Judging LLM-as-a-Judge with MT-Bench and Chatbot Arena - Foundational paper introducing the LLM-as-a-Judge paradigm

- Judge’s Verdict: A Comprehensive Analysis of LLM Judge Capability Through Human Agreement - introduces a two-step benchmark that evaluates 54 LLMs as judges by testing their correlation with human judgment and agreement patterns, revealing that 27 models achieve top-tier performance regardless of size through either human-like or super-consistent judgment behaviors.

- LLMs-as-Judges: A Comprehensive Survey on LLM-based Evaluation Methods

- From Generation to Judgment: Opportunities and Challenges of LLM-as-a-judge (2024) - survey covering the complete landscape of LLM-as-a-Judge with systematic taxonomy and latest challenges

- LLM-as-a-Judge Resource Hub - Central repository with paper lists, tools, and ongoing research

- Preference Leakage: A Contamination Problem in LLM-as-a-judge - Latest research on bias in judge models

- Who’s Your Judge? On the Detectability of LLM-Generated Judgments - Emerging research on judgment detection and transparency